THE SOLUTION

WeCounterHate stops the spread of hate speech, one retweet at a time.

First, AI helps identify tweets containing hate speech. Once identified, they’re tagged with a reply. This permanent marker lets those looking to spread hate know that hitting retweet will commit a donation to a nonprofit that fights for inclusion, diversity and equality.

Technology may have given hate a new way to spread.

But technology gave us the power to stop it.

Examples of actual hate speech countered by the WeCounterHate AI platform:

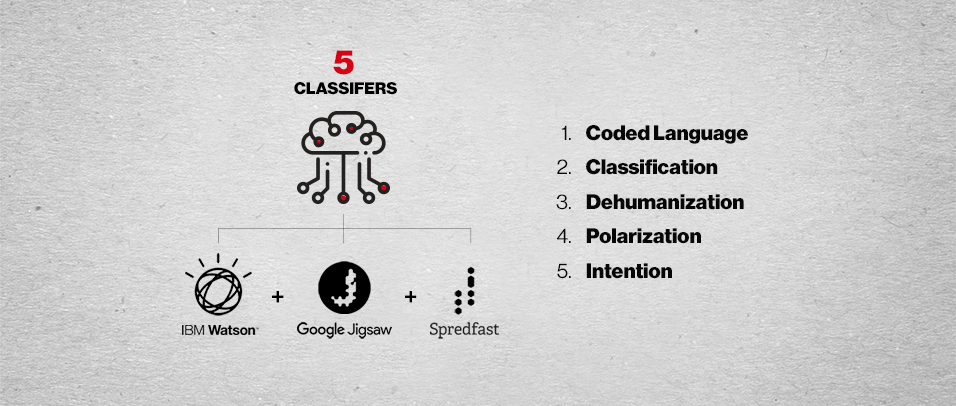

We created five proprietary classifiers, then wove together three discrete technologies into a new AI platform that classifies hate speech and rates its toxicity.

You can’t stop what you don’t understand.

The first key to countering hate speech is to have a clear definition of what it is.

We adapted Dr. Gregory Stanton’s 10 Stages of Genocide to create a structure for hate speech identification. Originally presented in a briefing to the U.S. Department of State in 1996, the report helped us understand the process of classification and dehumanization.

We condensed the stages and removed those that aren’t relevant to Twitter (e.g. extermination). We also added contemporary phenomena found in social media (e.g. coded language).

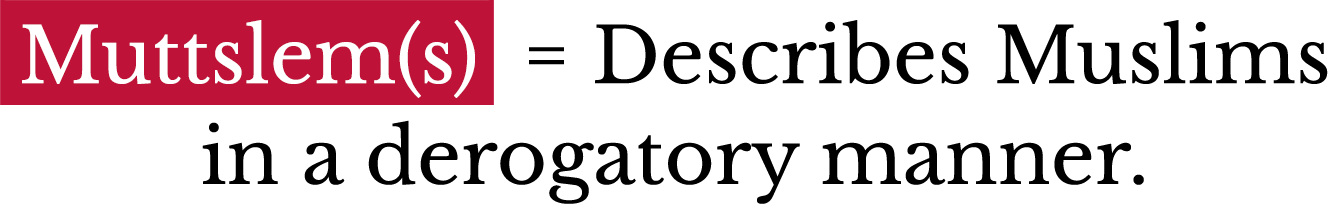

Life After Hate, a nonprofit dedicated to reforming people who seek to escape lives of hate, was founded by former hate group members. Their experience made them uniquely qualified to help train the AI. With their knowledge, the AI could find hate speech concealed by coded language.